How Will Super Alignment Work? Challenges and Criticisms of OpenAI's Approach to AGI Safety & X-Risk

Timelines are short, p(doom) is high: a global stop to frontier AI development until x-safety consensus is our only reasonable hope — EA Forum

OpenAI's chief scientist thinks humans could one day merge with machines

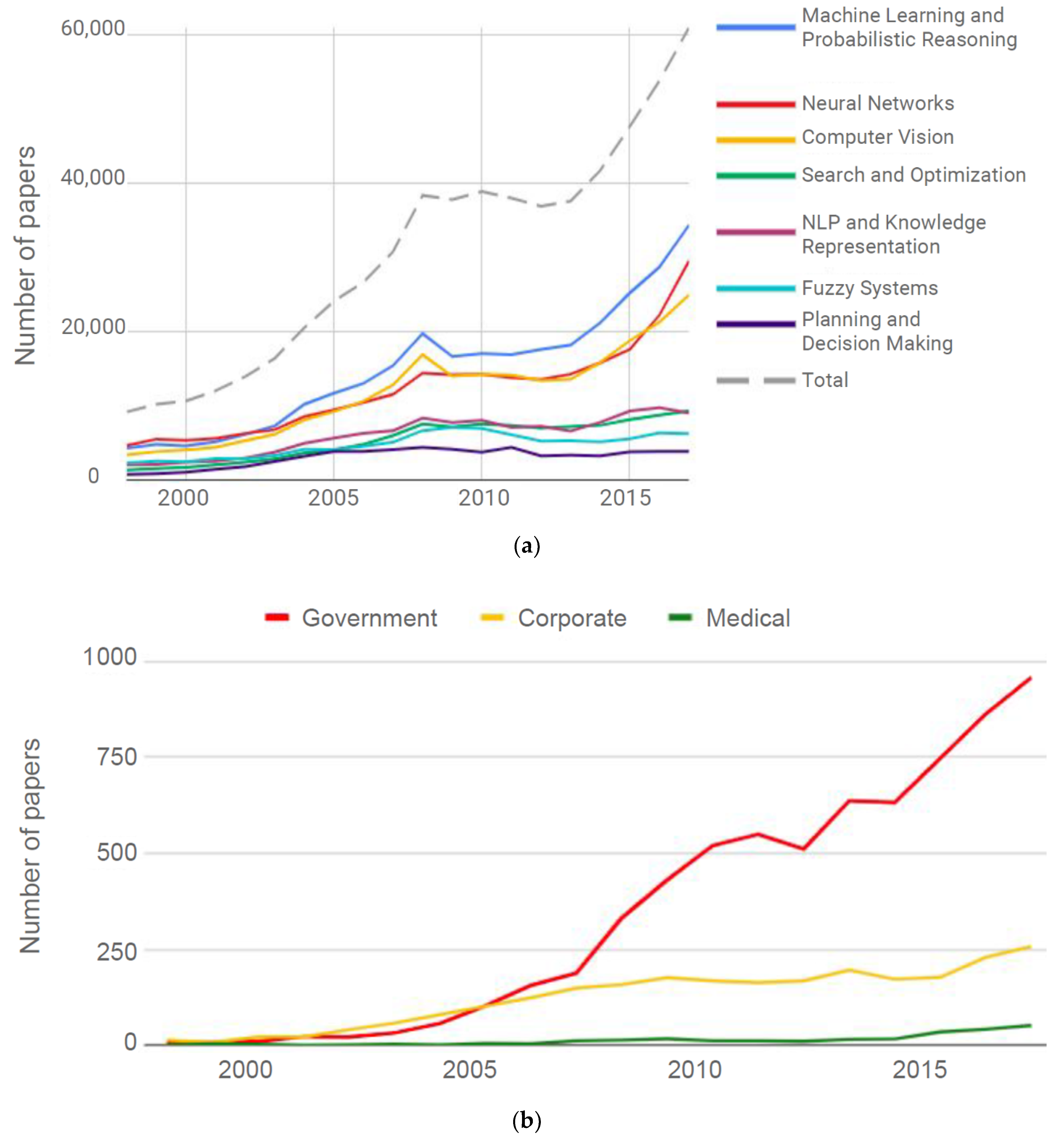

(PDF) Advanced AI Governance: A Literature Review of Problems, Options, and Proposals

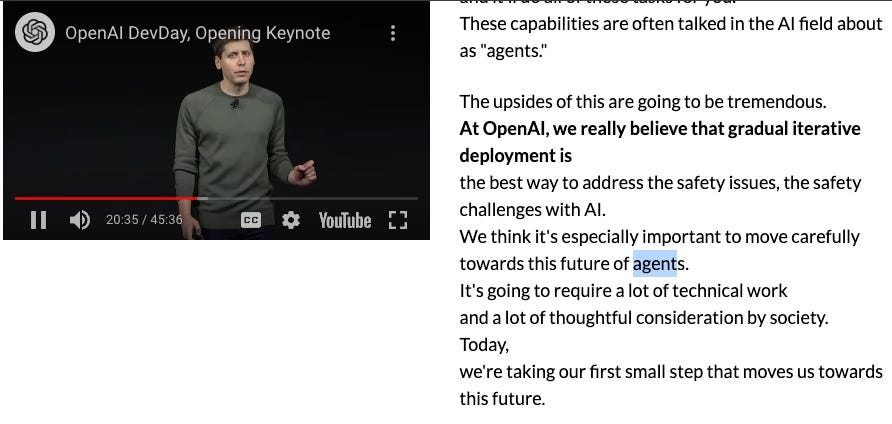

AGI is Being Achieved Incrementally (OpenAI DevDay w/ Simon Willison, Alex Volkov, Jim Fan, Raza Habib, Shreya Rajpal, Rahul Ligma, et al)

Generative AI VIII: AGI Dangers and Perspectives - Synthesis AI

The Surgeon General's Social Media Warning and A.I.'s Existential Risks - The New York Times

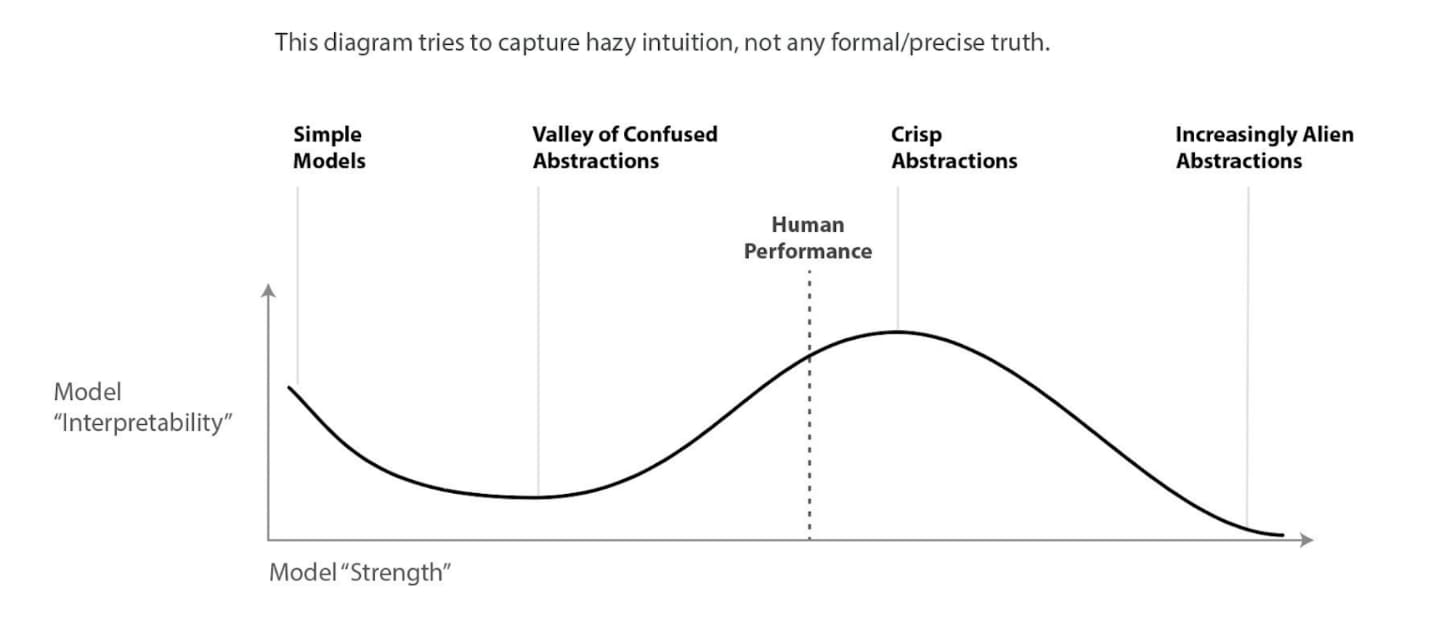

Alignment & Control Problem

(My understanding of) What Everyone in Technical Alignment is Doing and Why — AI Alignment Forum

BDCC, Free Full-Text

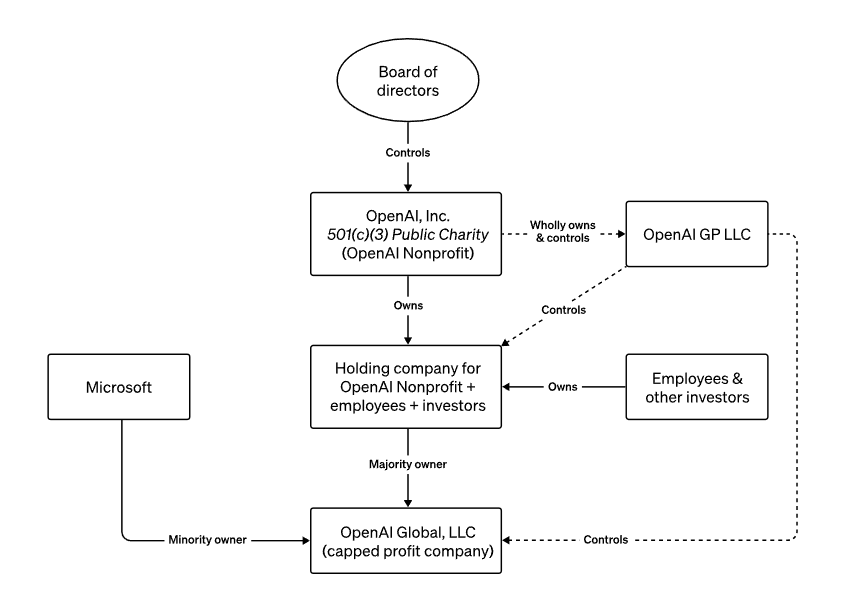

ARTIFICIAL INTELLIGENCE, PROMISE OR PERIL: PART 3 – AI GOVERNANCE AND VENTURE CAPITAL

Our approach to AI safety (OpenAI) : r/singularity

Preventing an AI-related catastrophe - 80,000 Hours

Safety timelines: How long will it take to solve alignment? — LessWrong

OpenAI says superintelligence will arrive this decade, so they're creating the Superalignment team : r/ChatGPT